Our research focuses on developing machine systems that are intuitive and easy for users to operate. To achieve this, we investigate control methodologies and interface designs. One of our key approaches involves utilizing bio-signals such as electroencephalograms (EEG) and electromyograms (EMG), which reflect human activity, to infer user intent and enable machine operation in a manner analogous to natural body movement.

We aim to develop machine systems that are easy for users to operate by investigating control methods and interface technologies. Among these, interfaces utilizing bio-signals such as electroencephalograms (EEG) and electromyograms (EMG) are attracting attention due to their potential applications in assistive devices and the enhancement of conventional controllers. Recent advancements in low-cost signal acquisition devices and signal estimation techniques have accelerated their practical implementation.

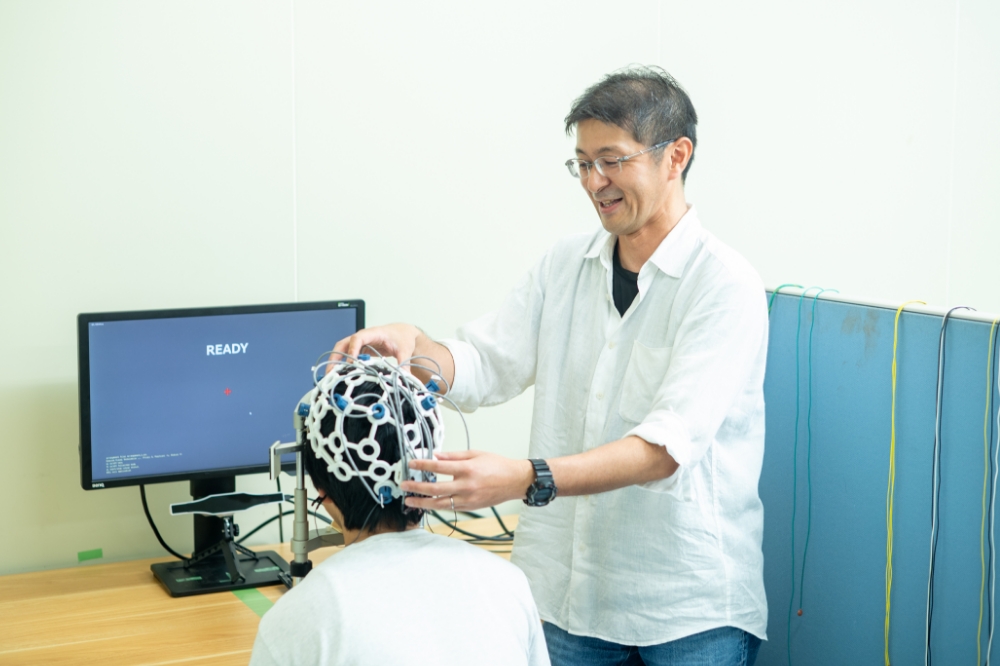

In this study, we focus on developing brain-computer interfaces based on EEG analysis and prosthetic control methods using motor unit signals. These technologies may enable systems that allow users to operate machines as naturally as moving their own bodies, simply by wearing the device.

Interfaces that utilize bio-signals derived from human activity, such as electroencephalograms (EEG) and electromyograms (EMG), enable device operation without physical movement, unlike conventional hand-operated interfaces. These technologies are expected to enhance not only assistive devices but also the functionality of existing controllers. In recent years, the development of low-cost signal acquisition devices and the establishment of signal estimation techniques have accelerated the practical implementation of bio-signal interfaces.

Against this background, we are investigating brain-computer interfaces that use novel EEG analysis methods, as well as prosthetic control systems that use motor unit signals, which represent the neural commands for muscle contraction. These advances may lead to systems that allow users to operate machines as naturally as moving their own bodies, simply by wearing the device.

In our EEG-based interface research, we propose a novel system based on steady-state visual evoked potentials (SSVEPs), which are brain responses evoked by visual stimuli that flicker at a constant frequency. The method exploits changes in the intensity distribution of brain waves that occur when the gaze position shifts in response to visual stimulus patterns. This makes it possible to estimate the gaze position from brain waves recorded during single-frequency visual stimulation, and it has the potential to significantly improve the spatial resolution of EEG-based gaze estimation.
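As a rough illustration of what an "intensity distribution" at a single stimulation frequency could look like in practice, the sketch below computes the spectral power at the stimulus frequency on each EEG channel and normalizes it across channels. It is a minimal sketch under stated assumptions, not the method proposed here: the function names, the 250 Hz sampling rate, the 12 Hz stimulus, the four-channel layout, and the synthetic data are all hypothetical, and mapping such a distribution to a gaze position would require an additional model that is not shown.

```python
import numpy as np


def power_at_frequency(eeg, fs, stim_freq):
    """Spectral power at stim_freq for each channel.

    eeg: array of shape (n_channels, n_samples); fs: sampling rate in Hz.
    """
    n_samples = eeg.shape[1]
    window = np.hanning(n_samples)  # taper to reduce spectral leakage
    spectrum = np.fft.rfft(eeg * window, axis=1)
    freqs = np.fft.rfftfreq(n_samples, d=1.0 / fs)
    bin_index = int(np.argmin(np.abs(freqs - stim_freq)))  # nearest FFT bin
    return np.abs(spectrum[:, bin_index]) ** 2


def intensity_distribution(power):
    """Normalize per-channel power so the values sum to 1."""
    return power / power.sum()


# Synthetic example (hypothetical values): 4 channels, 2 s at 250 Hz,
# with a 12 Hz response that is strongest on channel index 2.
rng = np.random.default_rng(0)
fs = 250.0
stim_freq = 12.0
n_samples = 500
t = np.arange(n_samples) / fs
amplitudes = np.array([0.5, 1.0, 2.0, 1.0])
response = amplitudes[:, None] * np.sin(2 * np.pi * stim_freq * t)
eeg = response + 0.5 * rng.standard_normal((4, n_samples))

distribution = intensity_distribution(power_at_frequency(eeg, fs, stim_freq))
strongest_channel = int(np.argmax(distribution))
```

In this toy setup, a change in which channels carry the strongest response would show up as a change in `distribution`, which is the kind of feature a gaze estimator could be trained on.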

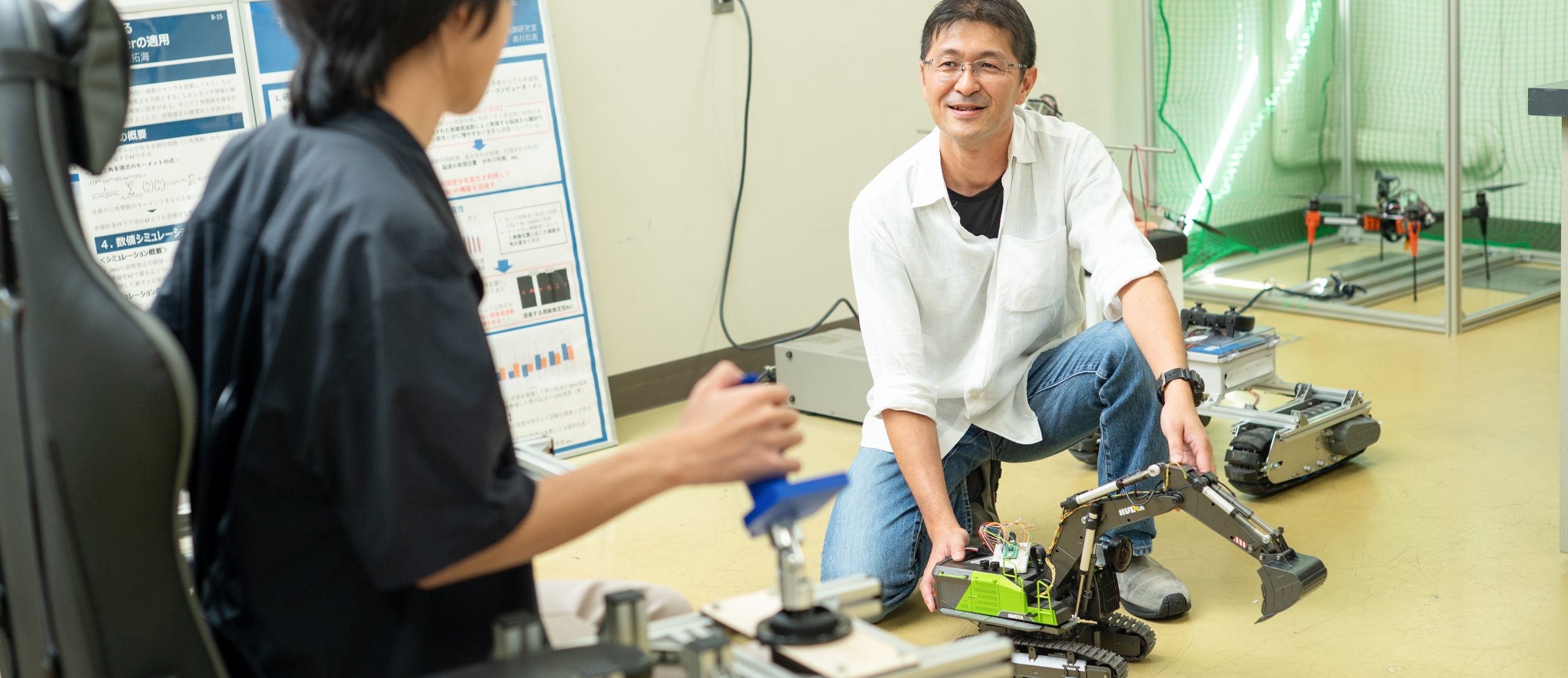

In our prosthetic control research, rather than directly estimating muscle force or movement from electromyographic (EMG) signals, we adopt a recently established technique that estimates motor unit activity, which represents the neural commands for muscle contraction, from multi-channel EMG recordings. Our goal is to develop a new method for motion state estimation based on motor units and apply it to precise and responsive prosthetic control.
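To make the idea of a motor-unit-based control feature more concrete, the sketch below turns motor unit discharge times into smoothed discharge rates and sums them into a single drive signal. It assumes the discharge times have already been obtained by decomposing the multi-channel EMG, which is not shown. The function name, the 0.4 s Hann smoothing window, the sampling rate, and the two synthetic motor units are hypothetical choices for illustration and are not taken from this research.

```python
import numpy as np


def smoothed_discharge_rate(spike_times, fs, duration, window_s=0.4):
    """Smoothed discharge rate (spikes per second) of one motor unit.

    spike_times: discharge times in seconds; fs: output sampling rate in Hz.
    """
    n_samples = int(round(duration * fs))
    train = np.zeros(n_samples)
    idx = np.round(np.asarray(spike_times) * fs).astype(int)
    idx = idx[(idx >= 0) & (idx < n_samples)]
    np.add.at(train, idx, 1.0)  # binary spike train (counts per sample)
    window = np.hanning(int(round(window_s * fs)))
    window /= window.sum()  # unit-area window keeps the spike count
    return np.convolve(train, window, mode="same") * fs


# Synthetic example (hypothetical values): two motor units firing
# regularly at 10 Hz and 15 Hz for about 2 s.
fs = 1000.0
duration = 2.0
unit_a = 0.05 + 0.1 * np.arange(20)
unit_b = 0.02 + np.arange(29) / 15.0

rate_a = smoothed_discharge_rate(unit_a, fs, duration)
rate_b = smoothed_discharge_rate(unit_b, fs, duration)
cumulative_rate = rate_a + rate_b  # simple summed drive across units
```

A signal such as `cumulative_rate` could then be mapped to a prosthesis command, for example by thresholding or by a regression model; that mapping is a separate design choice.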

We anticipate the following applications of this research: non-contact menu selection interfaces and gaze tracking systems that use brainwave signals; active prosthetic limbs that can be operated even without residual muscle tissue; and functional enhancements and precision improvements to existing interfaces through the use of bioelectrical signals.

| Research | |
|---|---|
| Journal | Planned Submission |

Learn about the cutting-edge research achievements, advanced technical resources, and know-how that the Graduate School of Engineering at the University of Hyogo can provide. We welcome partners who can create the future together with us by jointly generating new ideas and technologies through collaborative research, commissioned research, and technical consultations.
